Google has announced the release of Nano Banana 2, officially named Gemini 3.1 Flash Image, its latest image generation model aimed at faster, more scalable visual creation. The new model succeeds the original Nano Banana, launched in August last year, and Nano Banana Pro, which arrived in November.

Nano Banana 2 is positioned to balance speed and quality, combining the advanced visual reasoning of the Pro tier with the low-latency performance of Google’s Flash architecture. According to Google, the update delivers a significantly improved price-to-performance ratio for developers building image-heavy applications at scale.

Nano Banana 2 Key Features & Upgrades

Nano Banana 2 introduces several enhancements over its predecessors:

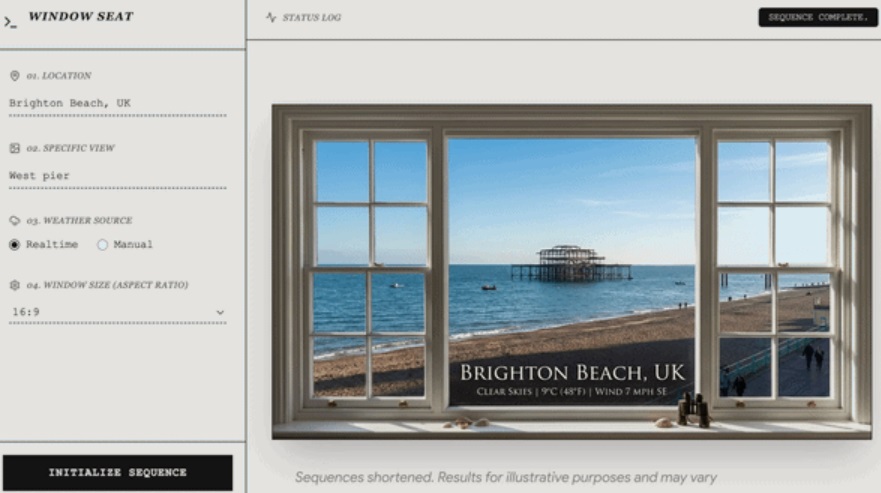

- Real-time knowledge integration: The model can reference real-world information and images from web search to generate accurate visuals. Google showcased this with a “Window Seat” demo that creates photorealistic window views based on real locations and live weather conditions.

- Improved text rendering and localization: The model can generate readable, well-aligned text within images, enabling use cases such as UI mockups and marketing creatives. It also supports in-image localization. A demo called “Global Ad Localizer” shows ad visuals automatically translated and adapted for different countries.

- Subject consistency across scenes: Nano Banana 2 supports visual consistency for up to five characters and 14 objects in a single workflow. Google demonstrated this through a “Pet Passport” example, where a pet remains visually accurate across multiple global landmarks using one reference image.

- Configurable reasoning depth: Developers can control how much the model “thinks” before generating an image. Options include Minimal (default) and High/Dynamic, allowing better instruction-following for complex prompts.

- Expanded output formats: Support has been added for extreme aspect ratios such as 4:1, 1:4, 8:1, and 1:8. A new 512px resolution tier has also been introduced to reduce latency in high-volume pipelines, alongside existing 1K, 2K, and 4K outputs.

- Enhanced visual fidelity: Google says the model delivers richer textures, more vibrant lighting, and improved detail while maintaining fast generation speeds.
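
For developers, the limits listed above could be checked on the client before a request is sent. The following is a minimal Python sketch under stated assumptions: `ImageRequest`, `validate`, and every field name are hypothetical and do not reflect the actual Gemini API schema, and treating “High/Dynamic” as two separate labels is also an assumption. Only the numeric limits, the resolution tiers, and the Minimal default come from Google's announcement.

```python
from __future__ import annotations

from dataclasses import dataclass

# Values from the announcement; the identifiers themselves are illustrative.
RESOLUTION_TIERS = {"512px", "1K", "2K", "4K"}
THINKING_LEVELS = {"minimal", "high", "dynamic"}  # assumption: "High/Dynamic" read as two labels
MAX_CHARACTERS = 5   # subject-consistency limit for characters
MAX_OBJECTS = 14     # subject-consistency limit for objects


@dataclass(frozen=True)
class ImageRequest:
    """Hypothetical request settings, not the real API's request type."""
    prompt: str
    aspect_ratio: str          # e.g. "8:1"; no whitelist, since only the new ratios are listed
    resolution: str
    thinking: str = "minimal"  # Minimal is described as the default
    characters: int = 0
    objects: int = 0


def parse_ratio(text: str) -> tuple[int, int] | None:
    """Parse 'W:H' into positive integers, or return None if malformed."""
    width, _, height = text.partition(":")
    if not (width.isdigit() and height.isdigit()):
        return None
    w, h = int(width), int(height)
    return (w, h) if w > 0 and h > 0 else None


def validate(req: ImageRequest) -> list[str]:
    """Return a list of problems; an empty list means the request fits the stated limits."""
    problems = []
    if parse_ratio(req.aspect_ratio) is None:
        problems.append(f"malformed aspect ratio: {req.aspect_ratio!r}")
    if req.resolution not in RESOLUTION_TIERS:
        problems.append(f"unknown resolution tier: {req.resolution!r}")
    if req.thinking not in THINKING_LEVELS:
        problems.append(f"unknown thinking level: {req.thinking!r}")
    if req.characters > MAX_CHARACTERS:
        problems.append(f"too many characters: {req.characters} > {MAX_CHARACTERS}")
    if req.objects > MAX_OBJECTS:
        problems.append(f"too many objects: {req.objects} > {MAX_OBJECTS}")
    return problems


if __name__ == "__main__":
    banner = ImageRequest(prompt="panoramic city skyline", aspect_ratio="8:1", resolution="512px")
    print(validate(banner))
```

A check like this only catches obvious mistakes early; the service itself remains the authority on which combinations of ratio, resolution, and reasoning depth it accepts.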

Google will continue offering Nano Banana Pro for workflows that require the highest factual and visual accuracy, while Nano Banana 2 is optimized for rapid generation and search-grounded tasks.

Nano Banana 2 Availability

Nano Banana 2 is now rolling out across the following Google platforms:

- Developer Tools: Available via paid API access through the Gemini API, Google AI Studio, Vertex AI, Google Antigravity, and Firebase

- Gemini App: Replaces Nano Banana Pro as the default image model across Fast, Thinking, and Pro modes; Pro and Ultra subscribers can still regenerate images using Nano Banana Pro

- Google Search: Integrated into AI Mode and Google Lens across mobile, desktop, and the Google app, expanding to 141 countries with support for eight additional languages

- Flow: Set as the default image generation model with zero credit usage

- Google Ads: Available for generating asset suggestions during campaign creation

Content Provenance & Verification

Images generated by Nano Banana 2 include Google’s SynthID watermarking, which works alongside C2PA Content Credentials to provide transparency about how content was created or modified. Google revealed that SynthID verification within the Gemini app has been used over 20 million times since its introduction in November. The company also confirmed plans to bring C2PA verification directly into the Gemini app in the near future.