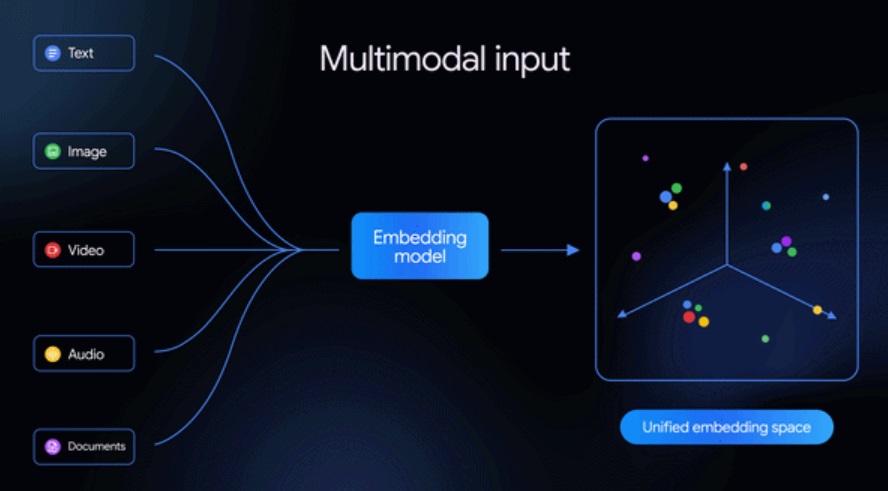

Google has announced Gemini Embedding 2, a new multimodal embedding model built on the Gemini architecture. The model is designed to process multiple types of data, including text, images, video, audio, and documents, placing them into a single unified embedding space.

Unlike earlier embedding models that focused primarily on text, Gemini Embedding 2 adds cross-modal understanding, so developers can build AI systems that analyze and connect different forms of media.

The model supports over 100 languages and can power AI applications such as Retrieval-Augmented Generation (RAG), semantic search, sentiment analysis, and large-scale data clustering.

Gemini Embedding 2 leverages the multimodal capabilities of the Gemini architecture to generate embeddings across various data types. The model can process interleaved multimodal inputs, allowing developers to combine multiple forms of data within a single request.

For example, applications can analyze text descriptions alongside images or video, enabling AI systems to understand relationships between different media formats.

This approach helps developers work with complex datasets that contain multiple types of content.

Key Features

Multimodal Input Support

Gemini Embedding 2 supports a wide range of input formats:

- Text: Up to 8,192 input tokens

- Images: Up to six images per request (PNG and JPEG formats)

- Video: Up to 120 seconds per input (MP4 and MOV)

- Audio: Processed directly, without an intermediate transcription step

- Documents: PDF files of up to six pages
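The limits above can be checked on the client before a request is sent. The following is a minimal sketch, not part of any Google SDK: `RequestSummary` and `check_limits` are hypothetical names, and the sketch assumes the application already knows the token count, media durations, and page counts of its inputs. Accepting `jpg` as an alias for JPEG is also an assumption.

```python
from dataclasses import dataclass

# Limits as listed in this article. All names here are hypothetical.
MAX_TEXT_TOKENS = 8192
MAX_IMAGES = 6
MAX_VIDEO_SECONDS = 120
MAX_PDF_PAGES = 6
IMAGE_FORMATS = {"png", "jpeg", "jpg"}  # "jpg" treated as an alias (assumption)
VIDEO_FORMATS = {"mp4", "mov"}


@dataclass
class RequestSummary:
    """Hypothetical description of what one embedding request contains."""
    text_tokens: int = 0
    image_formats: tuple = ()
    video_seconds: float = 0.0
    video_format: str = ""
    pdf_pages: int = 0


def check_limits(req: RequestSummary) -> list:
    """Return human-readable limit violations; an empty list means none found."""
    problems = []
    if req.text_tokens > MAX_TEXT_TOKENS:
        problems.append(f"text has {req.text_tokens} tokens (max {MAX_TEXT_TOKENS})")
    if len(req.image_formats) > MAX_IMAGES:
        problems.append(f"{len(req.image_formats)} images (max {MAX_IMAGES})")
    for fmt in req.image_formats:
        if fmt.lower() not in IMAGE_FORMATS:
            problems.append(f"unsupported image format: {fmt}")
    if req.video_seconds > MAX_VIDEO_SECONDS:
        problems.append(f"video is {req.video_seconds}s (max {MAX_VIDEO_SECONDS}s)")
    if req.video_format and req.video_format.lower() not in VIDEO_FORMATS:
        problems.append(f"unsupported video format: {req.video_format}")
    if req.pdf_pages > MAX_PDF_PAGES:
        problems.append(f"PDF has {req.pdf_pages} pages (max {MAX_PDF_PAGES})")
    return problems
```

A caller could split or trim any input that `check_limits` flags, for example by chunking a long PDF into six-page segments, before building the request.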

Interleaved Multimodal Inputs

The model can process multiple media types in a single request, enabling contextual understanding across inputs such as image and text together.

This capability is particularly useful for applications that require cross-media analysis and contextual search.

Matryoshka Representation Learning (MRL)

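Gemini Embedding 2 incorporates Matryoshka Representation Learning (MRL), a training technique that concentrates the most important information in the leading dimensions of each vector. As a result, embeddings can be shortened to fewer dimensions, typically at a modest cost in quality.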

The default embedding size is 3,072 dimensions, but developers can reduce the size depending on their storage and performance requirements.

Recommended embedding dimensions include:

- 3,072

- 1,536

- 768

Smaller vectors take less storage and are faster to compare, so this flexibility lets developers balance retrieval quality against infrastructure costs.
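To make the trade-off concrete, here is a minimal sketch of shortening an MRL-style embedding on the client and estimating raw storage. The function names are illustrative. The sketch assumes that keeping the leading dimensions is an acceptable way to shrink a vector, and renormalizing afterwards is a common precaution before cosine or dot-product comparison rather than something the article specifies.

```python
import math


def truncate_embedding(vector, dims):
    """Keep the first `dims` values and rescale the result to unit length."""
    if not 0 < dims <= len(vector):
        raise ValueError("dims must be between 1 and len(vector)")
    head = vector[:dims]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return list(head)  # an all-zero vector cannot be normalized
    return [x / norm for x in head]


def storage_gib(num_vectors, dims, bytes_per_value=4):
    """Raw storage in GiB for float32 vectors, ignoring index overhead."""
    return num_vectors * dims * bytes_per_value / 2**30


# Placeholder values standing in for a real 3,072-dimension embedding.
full = [math.sin(i + 1) for i in range(3072)]
small = truncate_embedding(full, 768)

for dims in (3072, 1536, 768):
    print(dims, round(storage_gib(1_000_000, dims), 2), "GiB per million vectors")
```

For one million float32 vectors, raw storage falls from about 11.4 GiB at 3,072 dimensions to about 2.9 GiB at 768, before any index overhead.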

AI Capabilities and Use Cases

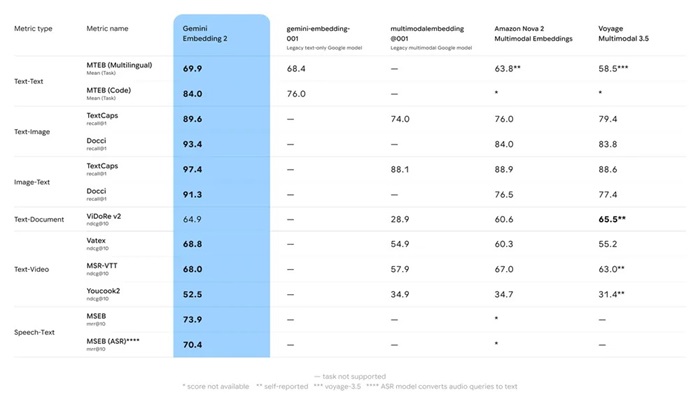

Google says Gemini Embedding 2 supports embedding tasks across text, images, video, and speech, and introduces native audio processing.

The model is designed for several AI applications, including:

- Retrieval-Augmented Generation (RAG)

- Semantic search

- Sentiment analysis

- Data clustering

- Large-scale knowledge management systems
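Several of these use cases, semantic search and the retrieval step of RAG in particular, come down to ranking stored vectors by similarity to a query vector. The sketch below shows that step with cosine similarity in plain Python. The three-dimensional vectors and item names are made up for illustration and are not model output. The point is that text, image, and audio items can be ranked together because they share one embedding space.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, corpus, k=3):
    """Return the k (item_id, score) pairs most similar to the query."""
    scored = [(item_id, cosine_similarity(query, vec)) for item_id, vec in corpus.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vectors standing in for embeddings of different media types.
corpus = {
    "text:caption": [0.9, 0.1, 0.0],
    "image:photo": [0.8, 0.2, 0.1],
    "audio:podcast": [0.0, 0.2, 0.9],
}
query = [1.0, 0.0, 0.0]
results = top_k(query, corpus, k=2)
print([item_id for item_id, _ in results])
```

A linear scan like this is fine for small collections. At larger scale, a vector database would typically replace `top_k` with an approximate nearest-neighbor index.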

Availability

Gemini Embedding 2 is currently available in Public Preview through:

- Gemini API

- Vertex AI

Developers can integrate the model with popular AI frameworks and vector database tools such as:

- LangChain

- LlamaIndex

- Haystack

- Weaviate

- Qdrant

- ChromaDB

The model can also be combined with vector search systems to enable advanced multimodal data processing and retrieval.